Building AI systems is easy.

Building governed AI infrastructure is the hard part.

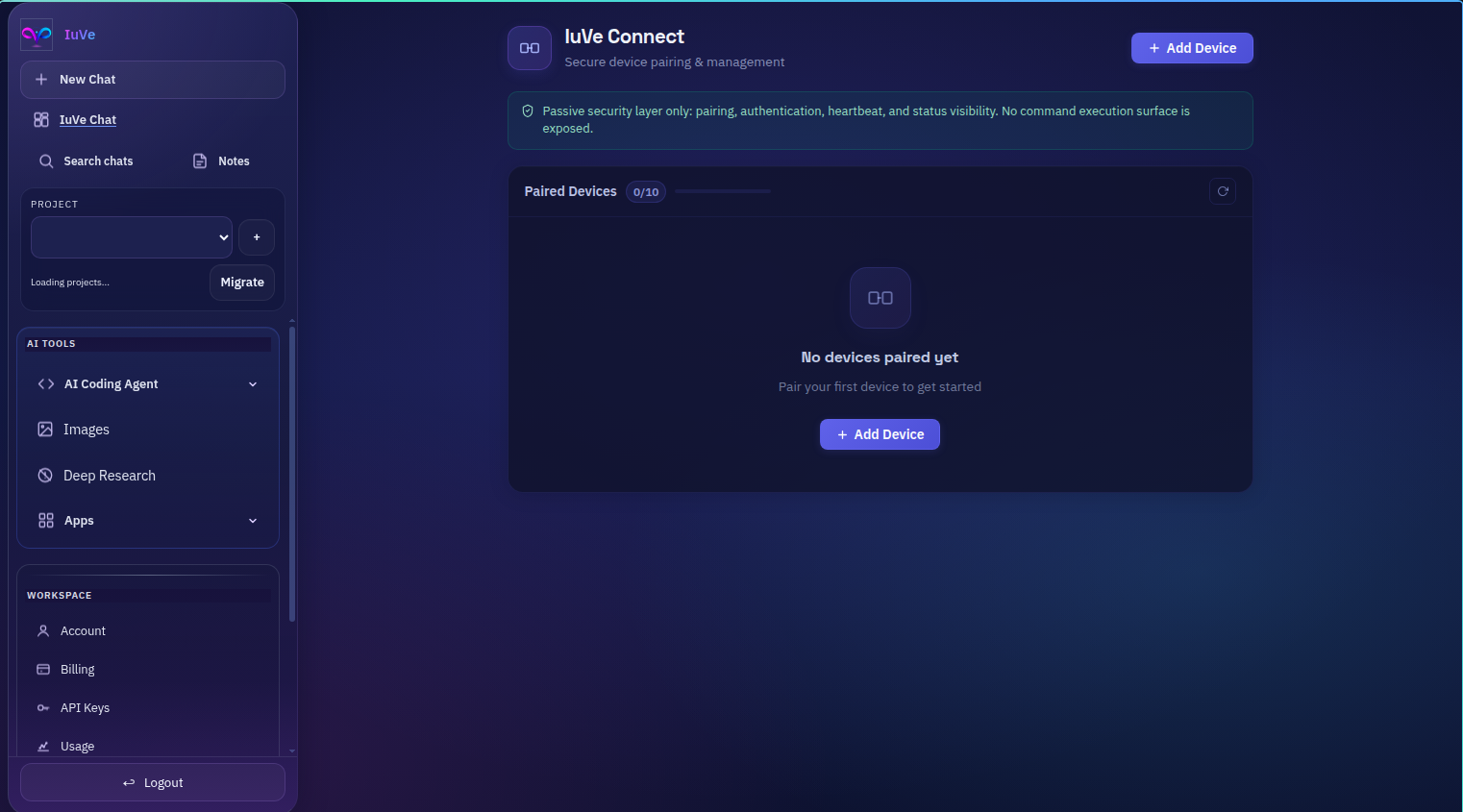

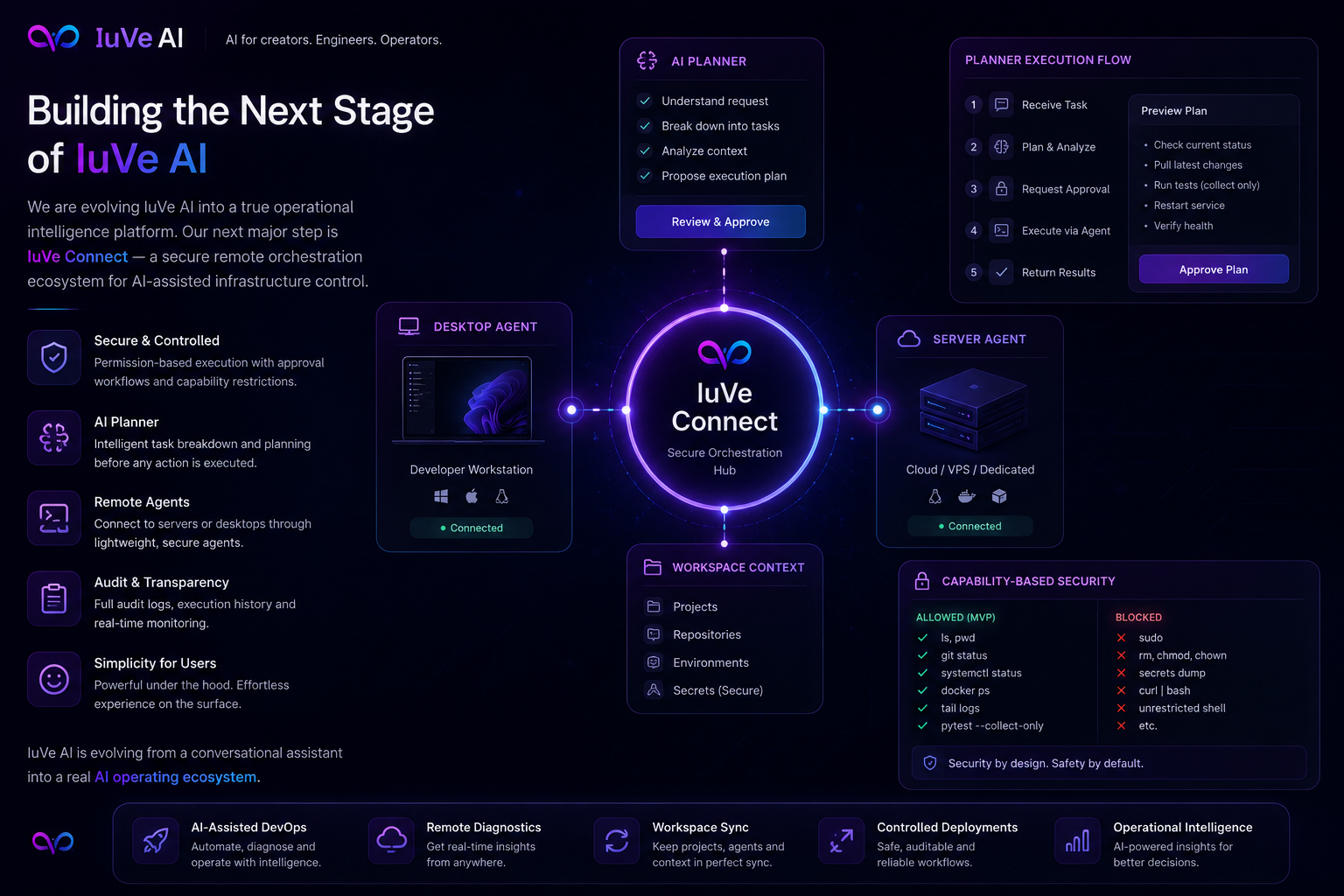

Over the last phase of IuVe Connect development, we made a deliberate architectural decision:

Not to build an “all-powerful AI agent” inside the IDE.

But instead to build a **passive, governed trust runtime**.

That distinction changed everything.

Instead of rushing into:

* shell execution,

* repository mutation,

* terminal orchestration,

* autonomous workflows,

we focused on:

* canonical architecture,

* anti-duplication governance,

* reconnect lifecycle integrity,

* trust-state management,

* rollback discipline,

* passive-only enforcement,

* operational evidence,

* compliance validation.

One of the biggest discoveries during the audit phase was that the real danger wasn’t a lack of features.

It was entropy.

Duplicate runtimes.

Duplicate reconnect logic.

Legacy entrypoints.

Fragmented storage models.

Experimental drift.

So before scaling the plugin ecosystem, we paused and built:

* a Canonical System Blueprint,

* a Compliance Gate framework,

* a Shared-Core plugin runtime,

* and strict passive-only enforcement boundaries.

The result:

A unified VS Code / Cursor plugin architecture with:

* single reconnect lifecycle,

* single heartbeat lifecycle,

* single trust-state model,

* single storage authority,

* compliance validation,

* migration checkpoints,

* isolated lifecycle testing,

* revoke/offline/degraded-state validation.
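To make the "single trust-state model" idea concrete, here is a minimal sketch of what one authoritative state machine could look like. This is not the IuVe Connect implementation: the `revoked`, `offline`, and `degraded` states come from the list above, while `disconnected`, `connecting`, `trusted`, every event name, and the `TrustStateMachine` class are assumptions made purely for illustration.

```typescript
// Hypothetical trust states. "revoked", "offline" and "degraded" are named in
// the post; the other states are assumptions for this sketch.
type TrustState =
  | "disconnected"
  | "connecting"
  | "trusted"
  | "degraded"
  | "offline"
  | "revoked";

// Hypothetical events that can move the runtime between states.
type TrustEvent =
  | "connect"
  | "handshakeOk"
  | "heartbeatMissed"
  | "heartbeatOk"
  | "networkLost"
  | "revoke";

// One transition table is the only place where state changes are defined,
// so reconnect and heartbeat logic cannot drift into parallel copies.
const TRANSITIONS: Record<TrustState, Partial<Record<TrustEvent, TrustState>>> = {
  disconnected: { connect: "connecting" },
  connecting: { handshakeOk: "trusted", networkLost: "offline", revoke: "revoked" },
  trusted: { heartbeatMissed: "degraded", networkLost: "offline", revoke: "revoked" },
  degraded: { heartbeatOk: "trusted", networkLost: "offline", revoke: "revoked" },
  offline: { connect: "connecting", revoke: "revoked" },
  revoked: {}, // terminal in this sketch: nothing leaves a revoked state
};

class TrustStateMachine {
  private current: TrustState = "disconnected";

  get state(): TrustState {
    return this.current;
  }

  // Applies an event; events with no defined transition are ignored.
  dispatch(event: TrustEvent): TrustState {
    const next = TRANSITIONS[this.current][event];
    if (next !== undefined) {
      this.current = next;
    }
    return this.current;
  }
}
```

In this sketch, a revoked runtime ignores a later `connect` event, which is the kind of behavior that revoke/offline/degraded-state validation would be expected to check.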

Most importantly:

the system remains intentionally non-autonomous.

No hidden subprocesses.

No shell execution.

No workspace mutation.

No repo scanning.

No terminal control.

Just a governed trust-connected runtime designed for long-term operational integrity.
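One way a passive-only boundary like this could be checked is with a small static gate that runs over plugin source text before release. The sketch below is an assumption about how such a gate might work, not a description of the actual Compliance Gate framework: the rule names, the `checkPassiveOnly` function, and the choice of patterns are all hypothetical. The patterns target Node's `child_process` module and the VS Code API calls `createTerminal`, `workspace.applyEdit`, and `workspace.findFiles`.

```typescript
// A violation found by the hypothetical passive-only gate.
interface Violation {
  file: string;
  rule: string;
  line: number; // 1-based line number within the file
}

// Hypothetical rule set: each pattern flags an API that would break the
// "no subprocess / no terminal / no mutation / no scanning" boundary.
const FORBIDDEN: { rule: string; pattern: RegExp }[] = [
  { rule: "no-subprocess", pattern: /\bchild_process\b/ },
  { rule: "no-terminal-control", pattern: /\bcreateTerminal\s*\(/ },
  { rule: "no-workspace-mutation", pattern: /\bworkspace\.applyEdit\s*\(/ },
  { rule: "no-repo-scanning", pattern: /\bworkspace\.findFiles\s*\(/ },
];

// Scans a map of file name -> source text and reports every forbidden usage.
// This is a plain text match, so it is a coarse first filter rather than a
// complete analysis (it would also flag matches inside comments or strings).
function checkPassiveOnly(files: Record<string, string>): Violation[] {
  const violations: Violation[] = [];
  for (const file of Object.keys(files)) {
    const lines = files[file].split("\n");
    lines.forEach((text, index) => {
      for (const { rule, pattern } of FORBIDDEN) {
        if (pattern.test(text)) {
          violations.push({ file, rule, line: index + 1 });
        }
      }
    });
  }
  return violations;
}
```

A build step could fail whenever `checkPassiveOnly` returns a non-empty list, which keeps the boundary enforced by tooling rather than by convention.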

This phase reinforced an important engineering lesson:

Feature velocity without governance eventually becomes operational entropy.

And in AI infrastructure, entropy compounds fast.

#AI #Architecture #PlatformEngineering #DevTools #Governance #SoftwareArchitecture #VSCode #Cursor #Engineering #CyberSecurity #Infrastructure #IuVeAI


